The Token Economy: Why High-Fidelity Data is the New Gold

The Educational Deep Dive

In our previous deep dive, we analyzed The Ingestion Bottleneck - the physical struggle AI agents face when parsing noisy web structures. Today, we look at the financial and computational reality behind that struggle: The Token Economy. In the era of generative search, your website is no longer just a collection of pages; it is a data bill presented to a machine. If your brand is "expensive" to synthesize, you are effectively invisible.

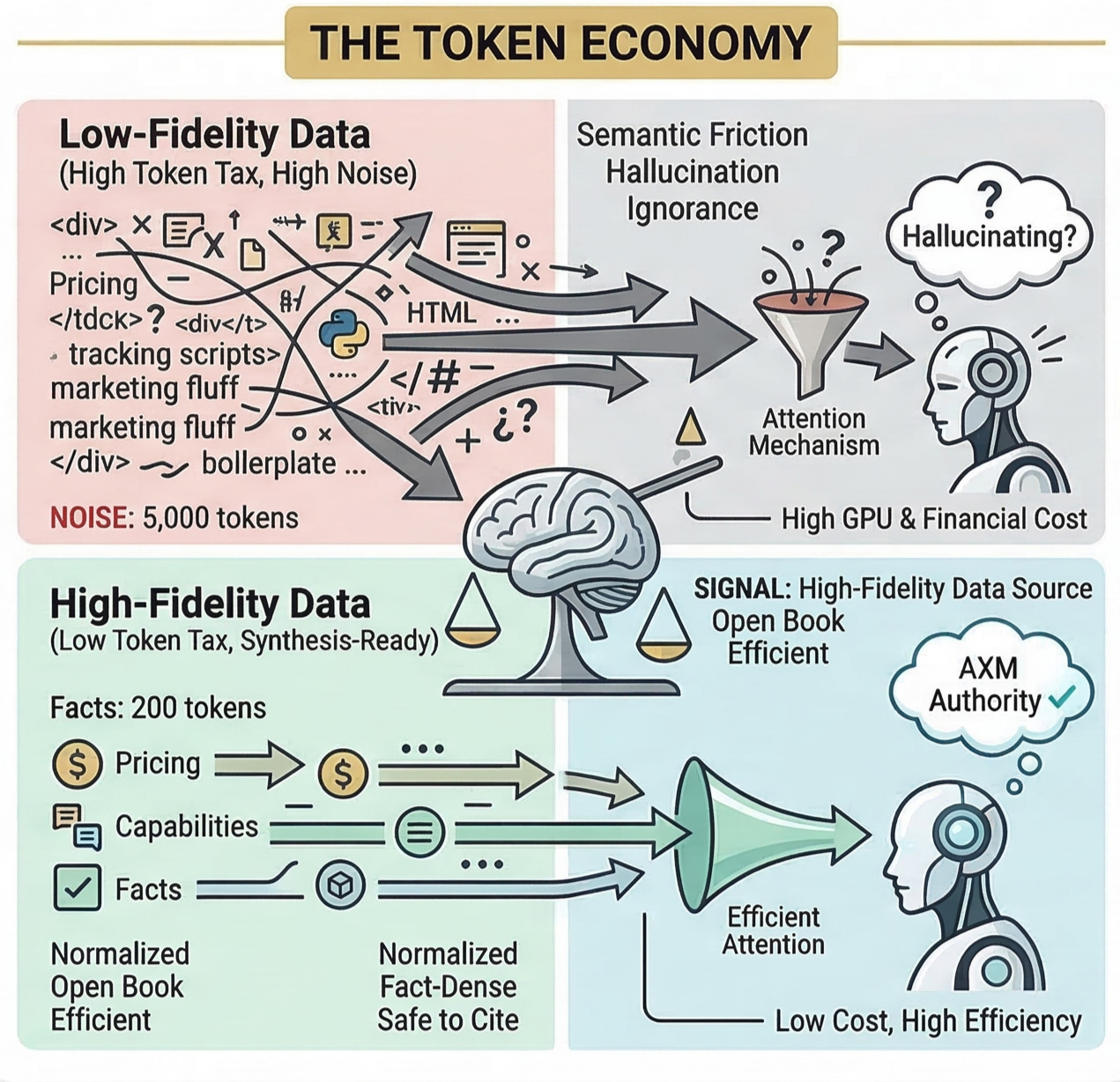

1. The "Token Tax": Understanding the Hidden Cost

Large Language Models (LLMs) do not read words; they process tokens, the sub-word units a tokenizer splits text into. For AI providers like OpenAI, Anthropic, or Google, processing every token consumes GPU time and therefore real money.

When an agent visits a typical modern website, it encounters a massive disproportion between signal and noise.

- The Noise: 5,000 tokens of boilerplate HTML, nested `<div>` tags, tracking scripts, and redundant CSS.

- The Signal: 200 tokens of actual facts about your product.

In this scenario, you are imposing a roughly 96% Token Tax on the agent: 5,000 of the 5,200 tokens it ingests carry no information about your product. Because AI models have a limited context window (their working memory), space wasted on noise leaves less room for the agent to reason about your brand.
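The arithmetic behind that figure can be sketched in a few lines. The function name `token_tax` is an illustrative choice, not an established metric, and the inputs are the example numbers from the scenario above.

```python
def token_tax(noise_tokens: int, signal_tokens: int) -> float:
    """Return the share of the payload that is noise, as a fraction of 1."""
    total = noise_tokens + signal_tokens
    if total == 0:
        raise ValueError("payload is empty")
    return noise_tokens / total


# Numbers from the scenario: 5,000 noise tokens, 200 signal tokens.
tax = token_tax(noise_tokens=5_000, signal_tokens=200)
print(f"Token tax: {tax:.1%}")  # Token tax: 96.2%
```

Halving the boilerplate to 2,500 tokens would still leave a tax above 90%, which is why trimming markup at the margins rarely changes the picture.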

2. Attention as Scarcity: Why Brevity is Authority

The heart of the Transformer architecture (the "T" in GPT) is the attention mechanism. It weighs how much each part of the input matters whenever the model produces a piece of its output.

When you serve a noisy site, you are forcing the model to divide its attention across thousands of irrelevant tokens. This creates semantic friction, which leads to two outcomes:

- Hallucination: The model loses track of the facts and begins to fill in the gaps with its own inference.

- Ignorance: To save costs, the AI provider may prioritize cheaper, more efficient sources over your bloated site.
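To make the idea of divided attention concrete, here is a toy sketch of a single softmax step. It is a deliberate simplification: real models use many attention heads and layers with learned scores, and the values `signal_score=2.0` and `noise_score=0.0` are assumptions chosen only for illustration. What it does show accurately is that softmax weights always sum to 1, so every extra token competes for a share of the same budget.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def signal_attention(signal_count, noise_count,
                     signal_score=2.0, noise_score=0.0):
    """Total attention weight that lands on the signal tokens."""
    scores = [signal_score] * signal_count + [noise_score] * noise_count
    weights = softmax(scores)
    return sum(weights[:signal_count])


clean = signal_attention(signal_count=200, noise_count=0)
noisy = signal_attention(signal_count=200, noise_count=5_000)
print(f"clean page: {clean:.2f}")  # 1.00
print(f"noisy page: {noisy:.2f}")  # about 0.23
```

Even though each signal token scores higher than each noise token in this toy setup, the sheer number of noise tokens pulls most of the total weight away from the facts.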

The AXM Rule: In the token economy, information density is the ultimate trust signal. High-fidelity data is data that delivers the maximum amount of truth with the minimum amount of computational effort.

3. Measuring the Efficiency Gap (Eg), Revisited

To manage your brand in this new economy, you must master your Efficiency Gap (Eg). It is the ratio of actionable insights to the total payload an agent must ingest:

$$E_g = \frac{\text{actionable insights}}{\text{total payload}}$$

A high Eg score means your site is an open book for AI: cheap to read, easy to understand, and safe to cite. This is what we define as a high-fidelity data source.
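A rough way to estimate this ratio for a single page is sketched below, using only Python's standard library. Two assumptions are baked in: visible text stands in for "actionable insights", and character counts stand in for token counts. Both are crude proxies, so treat the result as a relative indicator for comparing pages, not as a precise score.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style> elements."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def efficiency_gap(html: str) -> float:
    """Visible-text characters divided by total payload characters."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    signal = len(" ".join(parser.chunks))
    total = len(html)
    return signal / total if total else 0.0


# A hypothetical page: one fact wrapped in markup, styling, and tracking.
page = (
    "<html><head><style>body{color:red}</style>"
    "<script>track()</script></head>"
    "<body><div><div><p>Price: $49 per month</p></div></div></body></html>"
)
eg = efficiency_gap(page)
print(f"Eg = {eg:.2f}")
```

Running the same function over the raw HTML and over a stripped-down version of the same page gives a quick before-and-after comparison.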

4. Building for the Machine Mind: The Shift to Synthesis-Ready

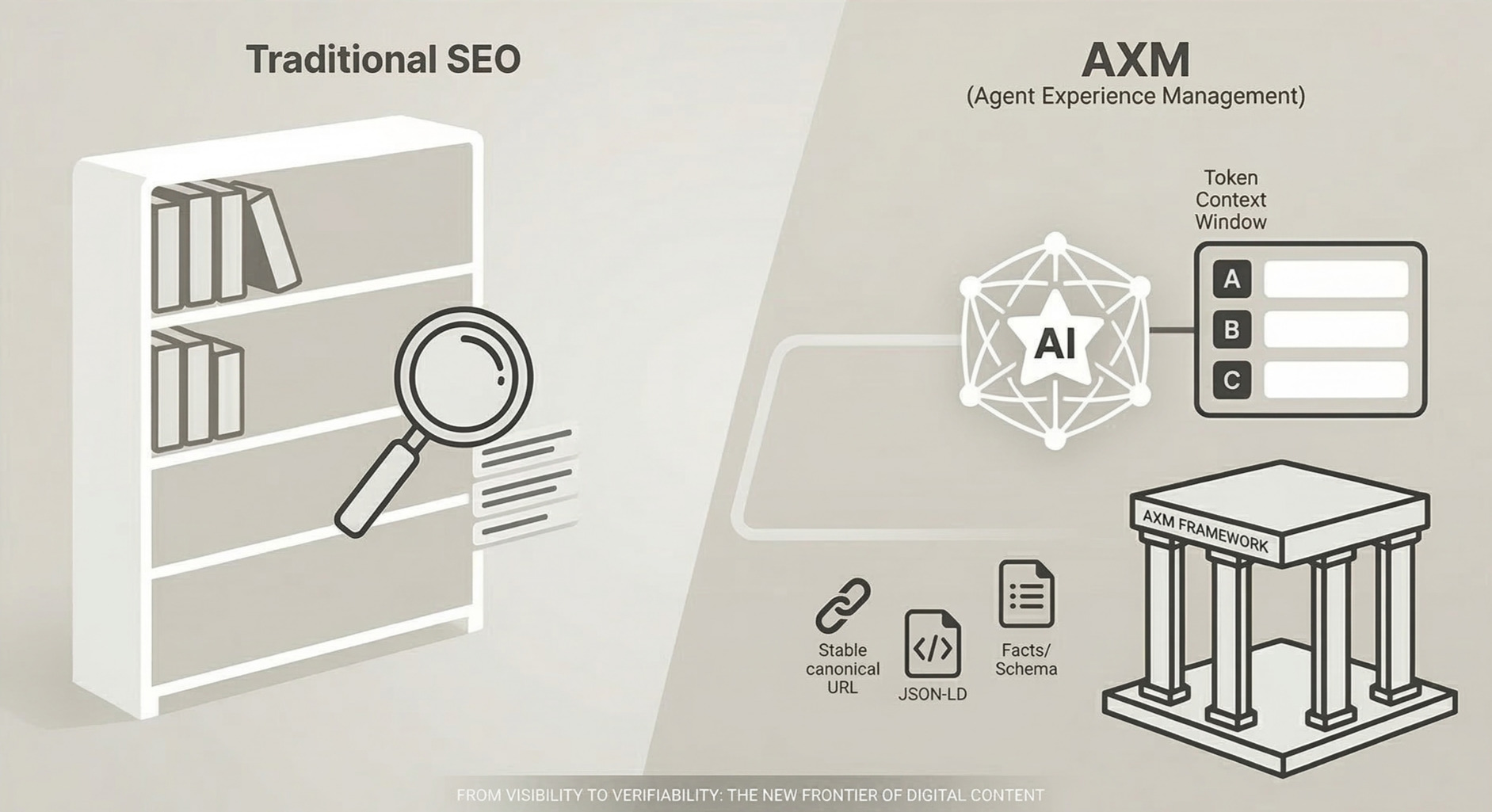

Transitioning to an AXM-first strategy does not mean deleting your beautiful UI. It means providing a parallel reality for machines.

The goal is to serve what we call the Perfect Version - a representation of your content that is:

- Normalized: Free of CMS-specific bloat.

- Context-Optimized: Structured specifically for how LLMs reason through hierarchies.

- Fact-Dense: Prioritizing concrete attributes (pricing, capabilities, constraints) over marketing fluff.
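As a minimal sketch of what such a representation could look like, the snippet below renders a set of product facts as a short, flat text document. The product name, the fact keys, and the values are all hypothetical placeholders, and the heading-plus-bullets layout is one possible choice, not a prescribed format.

```python
def render_fact_sheet(name: str, facts: dict[str, str]) -> str:
    """Render product facts as a compact heading plus one bullet per fact."""
    lines = [f"# {name}"]
    for key, value in facts.items():
        lines.append(f"- {key}: {value}")
    return "\n".join(lines)


# Hypothetical product data, for illustration only.
sheet = render_fact_sheet(
    "ExampleApp",
    {
        "pricing": "$49 per month",
        "capabilities": "invoicing, expense tracking",
        "constraints": "up to 10 users per workspace",
    },
)
print(sheet)
```

Every line of the output carries a concrete attribute, so almost none of the payload is spent on markup or marketing copy.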

By lowering the token tax, you are not just saving the AI company money; you are raising your own inclusion rate with agents. You become the most efficient choice for a machine that needs to provide a fast, accurate, and cheap answer to a user.

Conclusion: Value per Byte

The generative web is moving toward a value-per-byte model. Traditional SEO focused on volume; AXM focuses on purity. If your digital presence is a high-fidelity data source, agents will treat it as definitive truth. If it behaves like a cluttered brochure, it will be treated as noise.

SEO got you into the library. AXM makes you the only source the researcher can afford to quote.

What's Coming Next

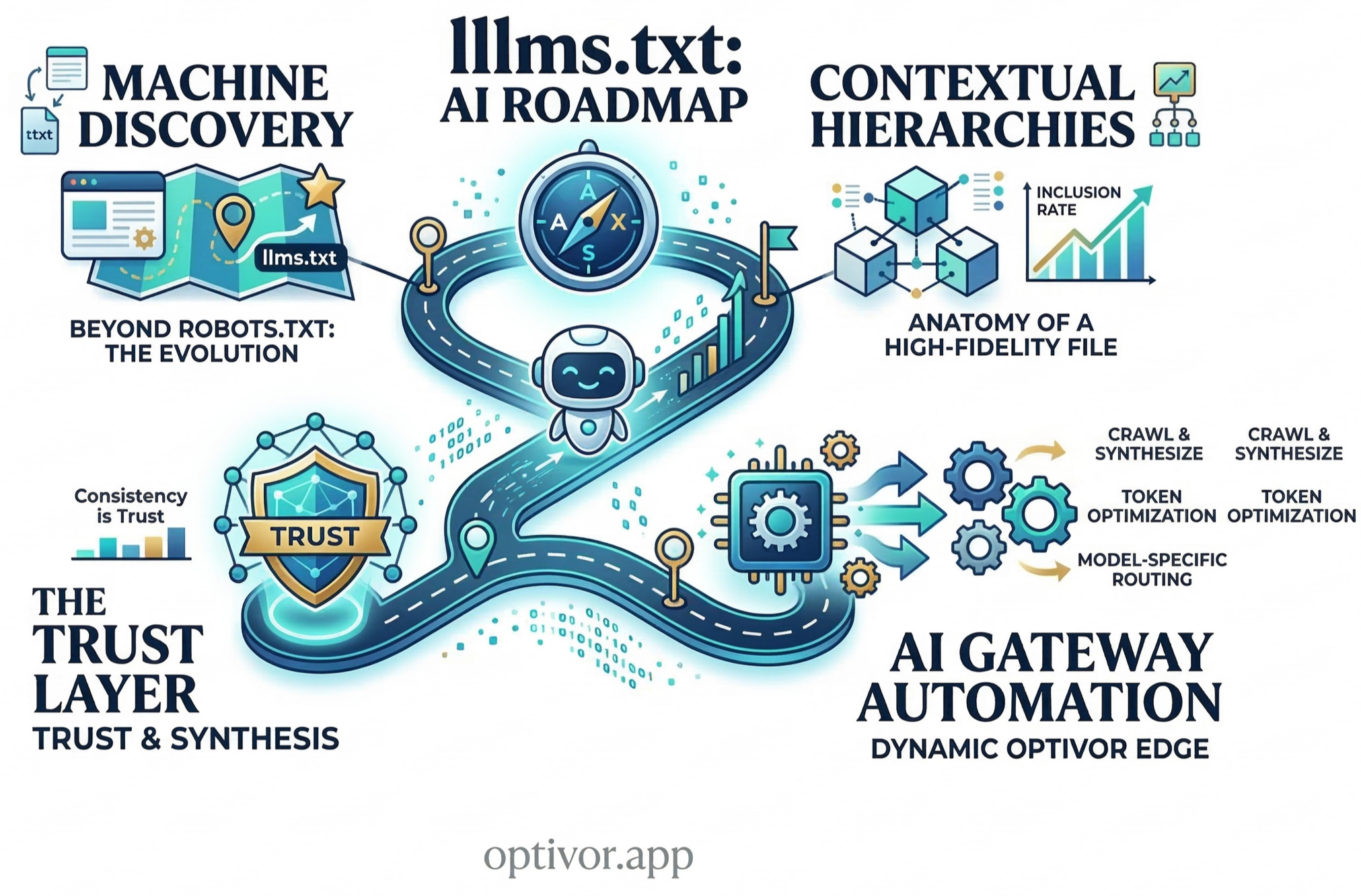

We have discussed the currency (tokens). Now, let us look at the roadmap.

The llms.txt Manifesto - Building the Ultimate Roadmap for AI Models.

We will explore why this emerging standard is the new robots.txt and how to architect it for maximum ingestion.

Minimize the tax. Be the answer.